Beyond the chatbot: How the Model Context Protocol (MCP) unlocks your data silos

Artificial intelligence has mastered the art of conversation, but it often fails at the science of your specific business. The Model Context Protocol (MCP) is the missing link that turns generic LLMs into specialized agents capable of real work.

Between 1998 and 2023, the way we retrieved information remained largely unchanged: We typed keywords into a search bar and sifted through links. Then came ChatGPT, and suddenly, the world expected instant, synthesized answers.

But for enterprises, a critical gap remains. While large language models (LLMs) possess vast "world knowledge," they are blind to your private data. They don’t know your customer’s tracking number, your internal wiki structure, or your live inventory levels. To bridge this gap, companies have been building expensive, brittle integrations.

That is about to change. A new open standard, the Model Context Protocol (MCP), is emerging as the "USB-C for AI," promising to standardize how AI agents connect to your data.

The challenge: The spaghetti code problem

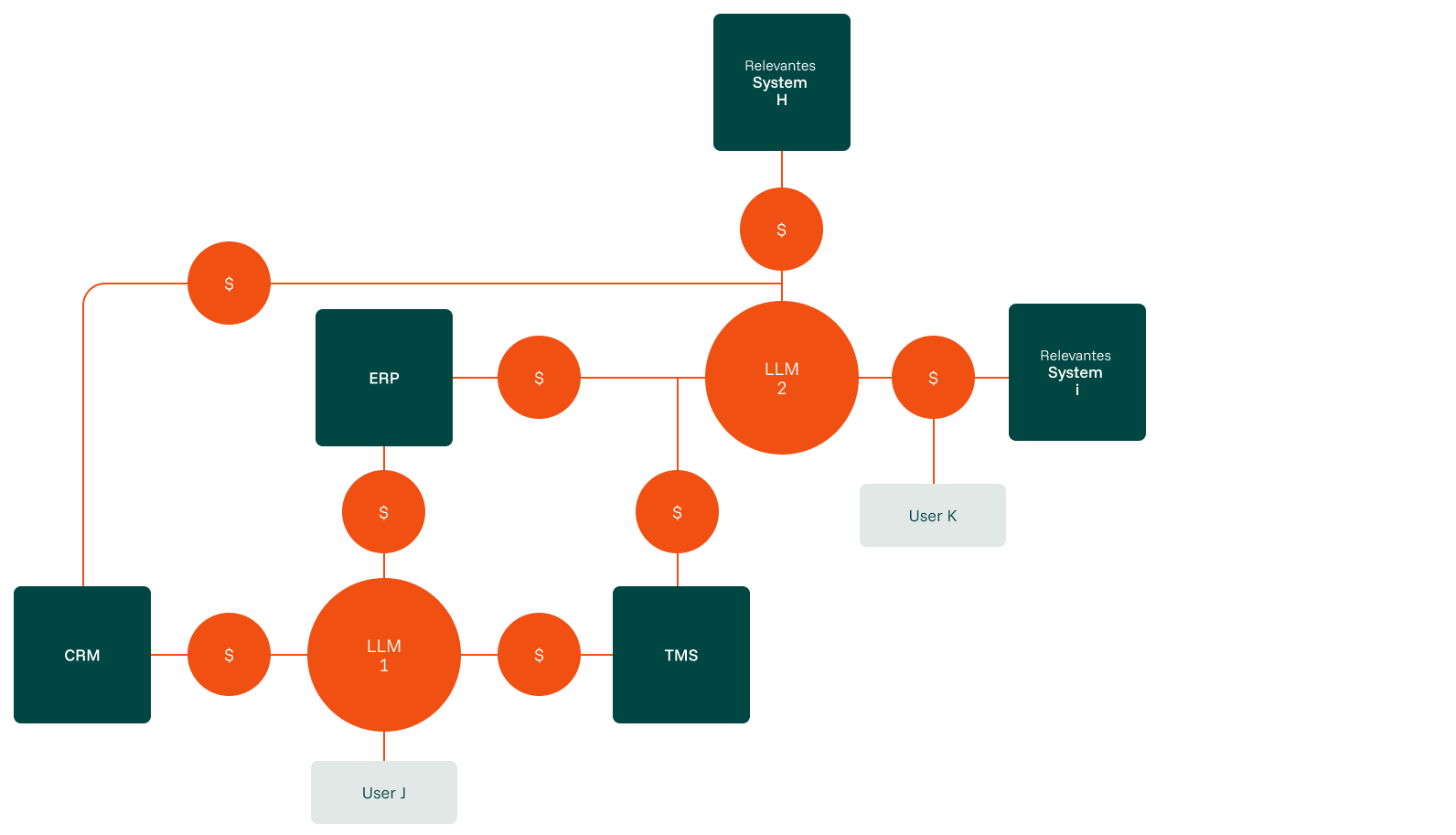

Currently, connecting an LLM to proprietary data is a bespoke engineering task. If you want your AI to access a CRM, a customer support system, and an ERP, you must build an expensive custom pipeline for each.

The problem compounds multiplicatively: every AI model needs its own connection to every system, so M models and N systems can mean up to M × N integrations. If you introduce a different AI model tomorrow (switching from GPT-4 to Claude, for example) or add a new internal tool, you have to rebuild those bridges. This leads to a spaghetti architecture where costs and complexity grow much faster than the number of use cases.

This friction is why many "AI pilots" remain pilots. They are simply too expensive to maintain and scale.

The solution: The USB-C for AI

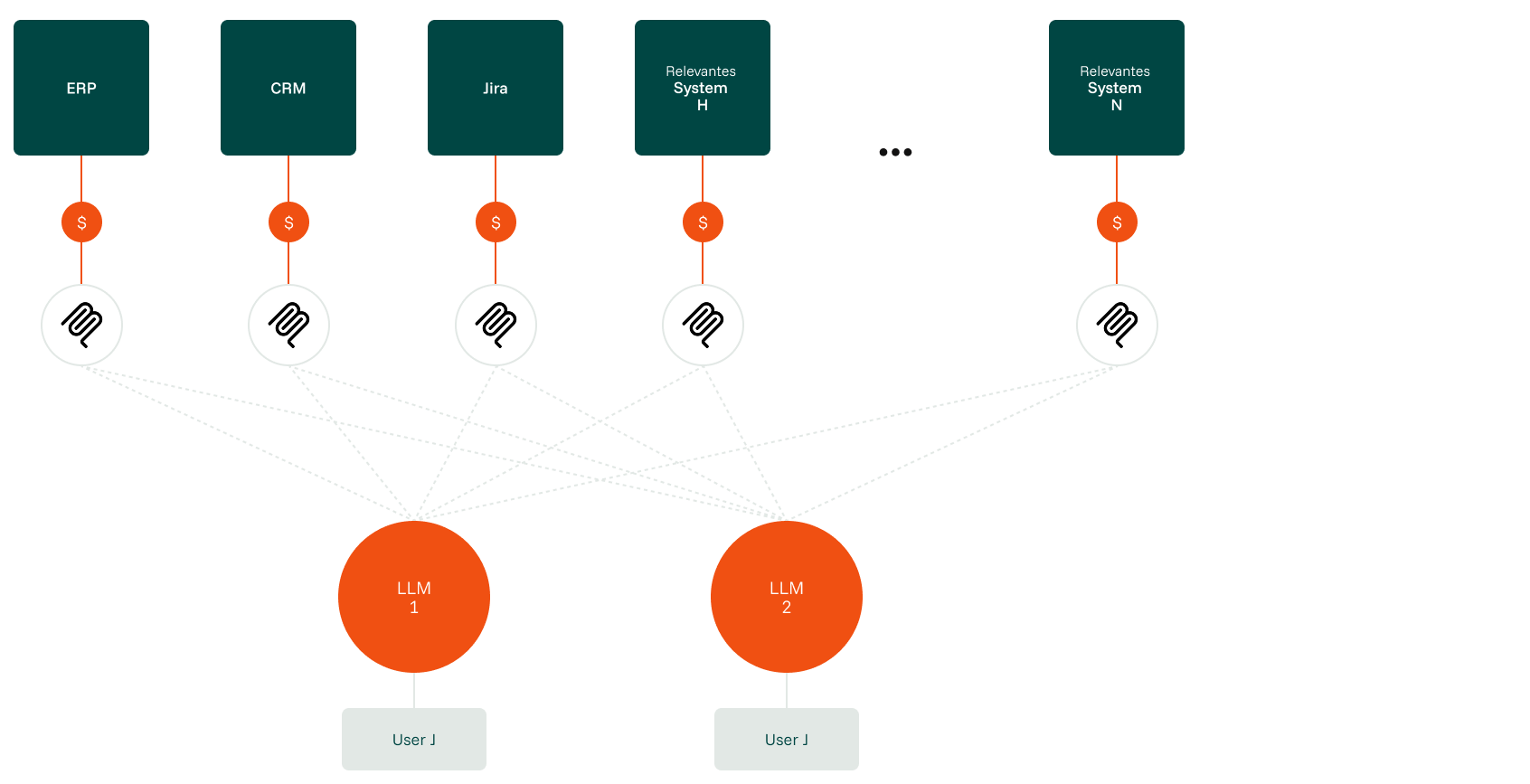

Think of MCP as a universal adapter. Instead of hard-coding a connection between a specific AI and a specific service, you build an MCP server.

This server acts as a standardized interface that tells any AI client: "Here are the resources I have (data), and here are the tools you can call (functions)."

- Plug & play: Once you expose your data via MCP, it doesn't matter which LLM client you use. You build the connection once, and it works everywhere.

- Bidirectional access: It allows the AI not just to "read" data (like a document), but to "act" on it, triggering database queries or API calls safely.
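To make the resources-and-tools idea concrete, here is a minimal sketch in plain Python of what such a server does when a client talks to it. It is not the official MCP SDK: it only imitates, in-process, the JSON-RPC 2.0 request shapes MCP uses (`tools/list`, `tools/call`, `resources/list`, `resources/read`) and skips the initialization handshake and the transport layer. The inventory data, the `check_stock` tool, and the `inventory://all` URI are invented for illustration.

```python
import json

# Hypothetical in-memory data standing in for a real inventory system.
INVENTORY = {"sku-123": 42, "sku-456": 0}


def check_stock(sku: str) -> str:
    """Tool handler: report the stock level for one SKU."""
    if sku not in INVENTORY:
        raise ValueError(f"unknown SKU: {sku}")
    return f"{sku}: {INVENTORY[sku]} units in stock"


# Tools are functions the AI may call; each is described by a JSON Schema.
TOOLS = {
    "check_stock": {
        "handler": check_stock,
        "description": "Return the current stock level for a SKU.",
        "inputSchema": {
            "type": "object",
            "properties": {"sku": {"type": "string"}},
            "required": ["sku"],
        },
    }
}

# Resources are read-only data, addressed by URI.
RESOURCES = {
    "inventory://all": {
        "name": "Full inventory",
        "read": lambda: json.dumps(INVENTORY, sort_keys=True),
    }
}


def _reply(request: dict, result=None, error=None) -> dict:
    """Wrap a result or an error in a JSON-RPC 2.0 response envelope."""
    response = {"jsonrpc": "2.0", "id": request.get("id")}
    if error is not None:
        response["error"] = error
    else:
        response["result"] = result
    return response


def handle(request: dict) -> dict:
    """Dispatch one request to the matching tool or resource."""
    method = request.get("method")
    params = request.get("params", {})
    if method == "tools/list":
        tools = [
            {"name": name, "description": t["description"], "inputSchema": t["inputSchema"]}
            for name, t in TOOLS.items()
        ]
        return _reply(request, {"tools": tools})
    if method == "tools/call":
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return _reply(request, error={"code": -32602, "message": "Unknown tool"})
        try:
            text, is_error = tool["handler"](**params.get("arguments", {})), False
        except Exception as exc:  # report tool failures to the caller as data
            text, is_error = str(exc), True
        return _reply(request, {"content": [{"type": "text", "text": text}], "isError": is_error})
    if method == "resources/list":
        listing = [{"uri": uri, "name": r["name"]} for uri, r in RESOURCES.items()]
        return _reply(request, {"resources": listing})
    if method == "resources/read":
        resource = RESOURCES.get(params.get("uri"))
        if resource is None:
            return _reply(request, error={"code": -32602, "message": "Unknown resource"})
        return _reply(request, {"contents": [{"uri": params["uri"], "text": resource["read"]()}]})
    return _reply(request, error={"code": -32601, "message": f"Method not found: {method}"})


if __name__ == "__main__":
    call = {"jsonrpc": "2.0", "id": 1, "method": "tools/call",
            "params": {"name": "check_stock", "arguments": {"sku": "sku-123"}}}
    print(json.dumps(handle(call), indent=2))
```

Any client that speaks the same protocol could discover and call `check_stock` without knowing how the inventory system works internally. In a real deployment you would typically rely on an MCP SDK instead of hand-writing this dispatch.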

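With MCP, integration effort shifts from multiplicative (one pipeline per pairing of AI model and system) to additive (one MCP server per system, one MCP client per AI application). You no longer build a new pipeline for every project; you build a reusable utility layer for your entire organization.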
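A back-of-the-envelope count illustrates the difference. The sketch below assumes the simplest possible model: without a shared protocol, every AI client needs its own connector to every system, and with MCP, each system needs one server and each AI client needs one MCP client implementation. The function names are hypothetical, and real projects will not split this cleanly.

```python
def bespoke_integrations(ai_clients: int, systems: int) -> int:
    """One custom pipeline per (AI client, system) pair."""
    return ai_clients * systems


def mcp_integrations(ai_clients: int, systems: int) -> int:
    """One MCP client per AI application plus one MCP server per system."""
    return ai_clients + systems


if __name__ == "__main__":
    for clients, systems in [(2, 3), (3, 10), (5, 20)]:
        print(
            f"{clients} AI clients, {systems} systems: "
            f"{bespoke_integrations(clients, systems)} bespoke pipelines vs. "
            f"{mcp_integrations(clients, systems)} MCP components"
        )
```

With two AI clients and three systems the saving is small (6 versus 5), but it widens quickly as either number grows.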

From chatbots to agents: The business value

Why does this matter for decision-makers? Because it radically simplifies building AI agents: systems that can autonomously perceive, decide, and act.

1. Revolutionizing customer experience (B2C or B2B)

Consider a live broadcast operations scenario. When a production manager asks, "What's the status of tonight's soccer game feed?", a standard LLM can only hallucinate or give generic advice. An MCP-enabled agent, by contrast, connects directly to the playout system, the rights management database, and the contribution scheduling tool via MCP servers.

It identifies the specific match feed, checks the satellite booking status ("Uplink confirmed, backup fiber path pending"), cross-references this with the rights clearance for all territories, and flags that the Danish territory license expires at midnight. The production manager gets one consolidated answer instead of checking three separate systems.
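The consolidation step can be sketched in a few lines of Python. Everything here is hypothetical: the three functions stand in for tool calls on three separate MCP servers, the feed ID and territory data are made-up samples, and the call order is hard-coded, whereas a real agent would let the model decide which tools to call.

```python
# Hypothetical stand-ins for tool calls on three separate MCP servers.
# The return values are made-up sample data echoing the scenario above.
def find_feed(event: str) -> dict:
    """Playout system: resolve an event description to a feed."""
    return {"feed_id": "feed-01", "event": event}


def booking_status(feed_id: str) -> dict:
    """Contribution scheduling tool: transmission path bookings."""
    return {"uplink": "confirmed", "backup_fiber_path": "pending"}


def rights_by_territory(feed_id: str) -> dict:
    """Rights management database: clearance status per territory."""
    return {"DK": "expires at midnight", "SE": "cleared"}


def feed_status(event: str) -> str:
    """Query all three systems and merge the results into one answer."""
    feed = find_feed(event)
    booking = booking_status(feed["feed_id"])
    rights = rights_by_territory(feed["feed_id"])
    # Only territories that are not fully cleared need attention.
    flagged = [
        f"{territory} {status}"
        for territory, status in sorted(rights.items())
        if status != "cleared"
    ]
    return "\n".join([
        f"Feed {feed['feed_id']} ({feed['event']})",
        f"Uplink {booking['uplink']}, backup fiber path {booking['backup_fiber_path']}",
        "Rights: " + ("; ".join(flagged) if flagged else "all territories cleared"),
    ])


if __name__ == "__main__":
    print(feed_status("tonight's soccer game"))
```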

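The same pattern works in customer-facing logistics. Asked where a parcel is, the agent identifies the specific tracking number, queries the live status ("Exception: Stuck at Cologne West"), can escalate to second- or third-level support autonomously if it is permitted to, and instantly informs the customer.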

2. Empowering the workforce (internal)

Internally, MCP turns knowledge management into an active assistant. Imagine an employee asking for all available information on an internal topic.

The AI can query past messages in the company’s chat system, cross-reference them with documentation pages in a wiki, and autonomously evaluate the results to refine the search further, seamlessly bridging two completely different software silos.

Where to start: Finding your low-hanging fruit

Implementing MCP is an architectural shift, but you don’t need to overhaul your entire IT landscape overnight. We recommend starting with processes that score high on the automatability scale:

- Look for tasks your team performs daily that involve manually moving data between systems.

- MCP thrives where data is structured (databases, spreadsheets) rather than unstructured (messy PDFs).

- Start with internal tools or "read-only" customer scenarios to minimize risk while proving ROI.

- Avoid processes involving personal data or intellectual property in early pilots. Where sensitive data is involved, MCP servers can enforce strict access boundaries, but the simplest start is where compliance isn't a blocker at all.
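One way to picture a "read-only" pilot and a strict access boundary is a server-side guard that hides and refuses any tool that changes data. The sketch below is an assumption about how such a guard could look, not part of MCP itself: `ToolRegistry`, the `read_only` flag, and the two order tools are hypothetical names.

```python
class ReadOnlyViolation(PermissionError):
    """Raised when a write tool is called on a read-only server."""


class ToolRegistry:
    """Hypothetical registry that enforces a read-only boundary."""

    def __init__(self, allow_writes: bool = False):
        self.allow_writes = allow_writes
        self._tools = {}  # name -> (handler, read_only)

    def register(self, name, handler, read_only: bool):
        self._tools[name] = (handler, read_only)

    def visible_tools(self) -> list:
        """Only advertise tools the current policy permits."""
        return sorted(
            name for name, (_, read_only) in self._tools.items()
            if read_only or self.allow_writes
        )

    def call(self, name: str, **arguments) -> str:
        handler, read_only = self._tools[name]
        # Enforce the boundary on the server, not in the prompt.
        if not read_only and not self.allow_writes:
            raise ReadOnlyViolation(f"{name} is disabled in read-only mode")
        return handler(**arguments)


# Pilot configuration: writes stay off until the read-only case is proven.
registry = ToolRegistry(allow_writes=False)
registry.register("get_order_status", lambda order_id: f"{order_id}: shipped", read_only=True)
registry.register("cancel_order", lambda order_id: f"{order_id}: cancelled", read_only=False)

if __name__ == "__main__":
    print(registry.visible_tools())
    print(registry.call("get_order_status", order_id="A1"))
```

Because the check lives in the server, it holds no matter which AI client connects or how it is prompted.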

Conclusion: The end of static AI

The era of static, text-based AI is ending. We are entering the age of connected AI agents that live alongside your data.

While bespoke RAG (retrieval-augmented generation) systems aren't dead, the future belongs to standardized protocols that lower the cost of curiosity. By adopting MCP, you aren't just building a chatbot; you are readying your APIs for a future where your software talks to itself, freeing your people to do what they do best.